What is a Data Lakehouse?

And why today’s complex data challenges make it the smarter, all-in-one solution for modern businesses

The Data Lakehouse Advantage

Anyone who has ever managed a large Data Warehouse knows there comes a tipping point - performance slows, and costs spiral out of control.

Traditional Data Lakes come with a different set of challenges: they lack the enterprise features needed for financial reporting and mission-critical decision-making, such as full data access audits, row-level security, and the AI capabilities modern businesses demand.

A Data Lakehouse bridges these two worlds. It combines the scalability and low-cost storage of a cloud data lake with the structure and governance of a data warehouse - giving you the best of both.

As an Elite Databricks Partner, we help organisations design, build, and get the most from their Data Lakehouse.

By working alongside Databricks - the driving force behind the Lakehouse - we ensure our clients unlock the full value of Delta Lake, Unity Catalog, and AI-driven performance.

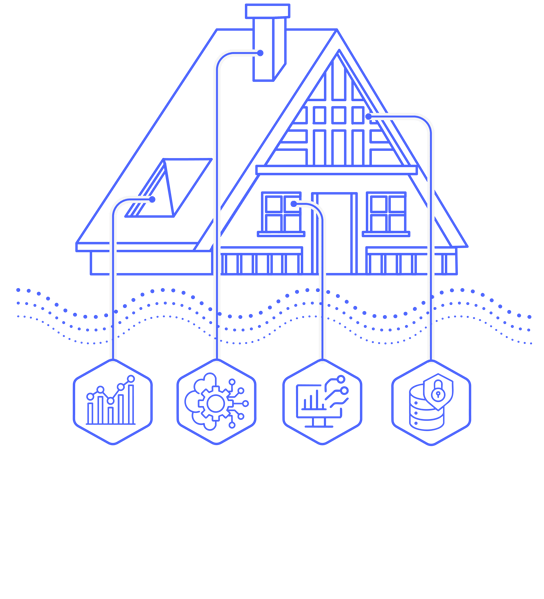

AI-Native Data Lakehouse

The Data Lakehouse has evolved into something far more powerful.

Today’s AI-Native Data Lakehouse integrates built-in AI and machine learning capabilities that transform how businesses operate.

It merges:

- Independently scalable cloud storage and compute.
- The organisational structures and management features of a data warehouse.
- Native AI tools for analytics, automation, and intelligent decision-making.

With support for open formats like Apache Iceberg and Delta Lake, you’re not locked into a single vendor or platform. You can use the best tools for the job - from schema-on-read querying with Apache Spark to fully structured SQL-based querying - to match your business’s skill set.
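To make the schema-on-read idea concrete, here is a minimal sketch in plain Python rather than Spark. The sample records, the `ORDER_SCHEMA` mapping, and the `read_with_schema` helper are all hypothetical and exist only for illustration: raw records are stored exactly as they arrive, and a schema is applied only when the data is read.

```python
import json

# Raw events land in storage exactly as they arrive (illustrative data only).
RAW_LINES = [
    '{"order_id": "1001", "amount": "19.99", "country": "GB"}',
    '{"order_id": "1002", "amount": "5.00"}',
    '{"order_id": "1003", "amount": "12.50", "country": "DE", "note": "gift"}',
]

# The schema belongs to the reader, not the storage:
# column name -> (type to cast to, default when the field is missing).
ORDER_SCHEMA = {
    "order_id": (int, None),
    "amount": (float, 0.0),
    "country": (str, "UNKNOWN"),
}


def read_with_schema(lines, schema):
    """Parse raw JSON lines and project them onto the schema at read time."""
    rows = []
    for line in lines:
        record = json.loads(line)
        row = {}
        for column, (cast, default) in schema.items():
            value = record.get(column)
            # Missing fields fall back to the default; extra fields are ignored.
            row[column] = cast(value) if value is not None else default
        rows.append(row)
    return rows


orders = read_with_schema(RAW_LINES, ORDER_SCHEMA)
total_amount = sum(row["amount"] for row in orders)
```

A different team could read the same raw lines with a different schema, for example one that keeps the `note` field, without rewriting the stored data. A structured, SQL-style table would instead enforce the schema when the data is written.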

Key Features & Benefits of a Data Lakehouse

From AI integration to cost savings, here’s how a Data Lakehouse transforms the way you manage and use data.

Native AI Integration

Built-in AI and ML capabilities with vector search, LLM integration, and real-time applications. Deploy AI agents, build RAG systems, and create intelligent automation - all within your Lakehouse.
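As a rough illustration of what vector search does in the retrieval step of a RAG system, the sketch below ranks a few documents by cosine similarity to a query. It is plain Python, not a Databricks API: the three-dimensional "embeddings", the document names, and the `top_k` helper are made up for the example, and a production system would typically use learned embeddings and an approximate nearest-neighbour index rather than this brute-force scan.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two non-zero vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def top_k(query, documents, k=2):
    """Return the ids of the k documents most similar to the query vector."""
    scored = [
        (cosine_similarity(query, vector), doc_id)
        for doc_id, vector in documents.items()
    ]
    scored.sort(reverse=True)  # highest similarity first
    return [doc_id for _, doc_id in scored[:k]]


# Hypothetical toy embeddings; real ones have hundreds of dimensions.
DOCUMENTS = {
    "refund_policy": [0.9, 0.1, 0.0],
    "shipping_times": [0.1, 0.9, 0.1],
    "returns_form": [0.8, 0.2, 0.1],
}

query_embedding = [1.0, 0.0, 0.0]
context_ids = top_k(query_embedding, DOCUMENTS, k=2)
```

In a RAG pipeline, the text behind `context_ids` would then be passed to the language model as context for answering the user's question.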

Breakthrough Performance

Innovations like Liquid Clustering, lightning-fast indexing, and intelligent caching deliver up to 7x performance improvements while cutting costs by as much as 40%.

Real-Time Analytics Unlocked

Stream processing, real-time analytics, and event-driven architectures remove batch delays. Process streaming and batch data together for true real-time decision-making.
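One way to picture processing streaming and batch data together is a single piece of logic that accepts either a finite batch or an open-ended stream. The sketch below is plain Python under that assumption, not a streaming engine: the event tuples, `running_totals`, and `live_stream` are hypothetical names for illustration.

```python
from collections import defaultdict
from itertools import chain


def running_totals(events):
    """Yield (customer, running_total) after each event.

    Works on any iterable, so the same logic serves a stored batch
    and a live stream without being written twice.
    """
    totals = defaultdict(float)
    for customer, amount in events:
        totals[customer] += amount
        yield customer, totals[customer]


# Historical data already sitting in storage (illustrative values).
HISTORICAL_BATCH = [("acme", 100.0), ("globex", 40.0), ("acme", 25.0)]


def live_stream():
    """Stand-in for an unbounded event source such as a message queue."""
    yield ("globex", 10.0)
    yield ("acme", 5.0)


# Batch only: process the stored history.
batch_result = list(running_totals(HISTORICAL_BATCH))

# Batch plus stream: replay history, then keep going with new events.
combined_result = list(running_totals(chain(HISTORICAL_BATCH, live_stream())))
```

Because the aggregation is defined once, results computed over history and results computed over new events stay consistent with each other, which is the property that removes the need for a separate batch pipeline.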

Enterprise-Grade Governance

With Unity Catalog and advanced governance frameworks, you get centralised metadata, automated compliance reporting, AI-powered data discovery, and fine-grained security controls.

Proven Cost Savings

Separation of storage and compute, intelligent auto-scaling, and the elimination of unnecessary data copies deliver immediate, measurable savings.

Business User Friendly

Natural language queries, AI-powered analytics, and no-code interfaces give business teams direct access to insights - self-service analytics that actually work.

Hydr8

Bring Your Data Lakehouse Vision to Life - Fast

You’ve seen what a modern Data Lakehouse can do.

Now, imagine having it fully set up, automated, and optimised in a fraction of the usual time.

Hydr8 is our “Data Lakehouse in a Box” - pre-built, Databricks-native, and packed with automation to get you from raw data to AI-ready insights in hours, not months.

Whether you’re starting from scratch or modernising an existing setup, Hydr8 accelerates your journey while keeping governance, scalability, and performance at the core.
